m (1 revision(s)) |

No edit summary |

||

| (13 intermediate revisions by 4 users not shown) | |||

| Line 1: | Line 1: | ||

{{header|infra}} | |||

= | = Global Presence = | ||

Fedora Infrastructure Network spans multiple continents. Datacenters are present in North America, UK and Germany. List of Datacenters goes as follows: | |||

# phx2 - main Datacenter in Phoenix, AZ, USA | |||

# rdu - North Carolina, USA | |||

# tummy - Colorado, USA | |||

# serverbeach - San Antonio, TX, USA | |||

# telia - Germany | |||

# osuosl - Oregon, USA | |||

# bodhost - UK | |||

# ibiblio - North Carolina, USA | |||

# internetx - Germany | |||

# colocation america - LA, USA | |||

= Network Topology = | |||

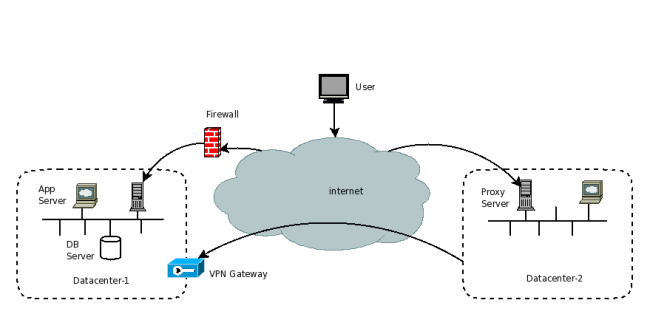

This section shows how our severs are interconnected or connected to the outside world. | |||

= | [[Image:FINTopology.png|border|thumb|center|650px|alt=Infrastructure Network Topology|Infrastructure Network Topology]] | ||

= Network Architecture = | |||

Following diagram shows overall network architecture. [https://fedoraproject.org/ fedoraproject.org] and [http://fedoraproject.org/wiki/Infrastructure/Services admin.fedoraproject.org] are round robin <code>DNS</code> entries. They are populated based on geoip information. For example, for North America they get a pool of servers in North America. Each of those servers in <code>DNS</code> is a proxy server. It accepts connections using <code>Apache</code>. <code>Apache</code> uses <code>HAProxy</code> as a backend, and in turn some(but not all) services use <code>varnish</code> for caching. Requests are replied to from cache if <code>varnish</code> has it cached, otherwise it sends into a backend application server. Many of these are in the main datacenter in phx2 and some are at other sites. The application server processes the request and sends it back. | |||

[[Image:FINArchitecture.png|border|thumb|center|650px|alt=Infrastructure Network Architecture|Infrastructure Network Architecture]] | |||

== Proxy View == | == Proxy View == | ||

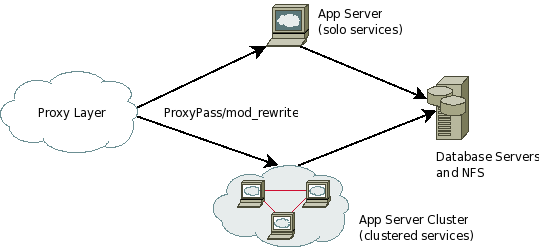

This shows whats going on in the proxies. | This shows whats going on in the proxies. Incoming <code>DNS</code> balanced user application requests hits <code>Apache httpd</code> in proxy server. Apache forwards request to <code>HAProxy</code>, which load balances requests over the app servers. Some of them reaches over <code>VPN</code>. An example of external source is [http://www.fedoraproject.org/people/ fedoraproject.org/people/] which is a proxy pass to [http://people.fedoraproject.org/ people.fedoraproject.org] hosted at Duke. In some cases there is also <code>varnish</code> between <code>HAProxy</code> and the app servers to help cache information. Local requests use standard alias in the <code>apache configs</code>. | ||

[[Image:FINProxyLayer.png|border|thumb|center|650px|alt=Infrastructure Proxy Server Flow Chart|Infrastructure Proxy Server Flow Chart]] | |||

[ | |||

== Application Layer == | == Application Layer == | ||

This is a generic view of how our applications work. | This is a generic view of how our applications work. Each application may have its own design, but the premise is the same. Incoming requests are load-balanced from proxy server and reaches to appropriate service box. All application servers in the clustered services area must be identical. If an exception is made it must get moved to solo services box. Most solo services will be one-offs or proof of concept(test) services. Most commonly our single point of failure lie in the data layer. | ||

[[Image:FINApplicationLayer.png|border|thumb|center|650px|alt=Infrastructure Application Layer Diagram|Infrastructure Application Layer Diagram]] | |||

[[Image: | |||

= Contributing = | |||

One can contribute to Fedora Infrastructure in several ways. If you are looking to improve the quality of content in this page then have a look at [https://fedoraproject.org/wiki/Infrastructure/GettingStarted GettingStarted]. And if you are wondering why no server in a great country like yours and want to make donations of hardware please visit our [https://fedoraproject.org/wiki/Donations donations] and [http://fedoraproject.org/sponsors sponsors] page. | |||

[[Category:Infrastructure]] | [[Category:Infrastructure]] | ||

Revision as of 16:39, 3 October 2012

Global Presence

The Fedora Infrastructure network spans multiple continents, with datacenters in North America, the UK, and Germany. The datacenters are:

- phx2 - main datacenter in Phoenix, AZ, USA

- rdu - North Carolina, USA

- tummy - Colorado, USA

- serverbeach - San Antonio, TX, USA

- telia - Germany

- osuosl - Oregon, USA

- bodhost - UK

- ibiblio - North Carolina, USA

- internetx - Germany

- colocation america - LA, USA

Network Topology

This section shows how our servers are connected to each other and to the outside world (diagram: FINTopology.png, "Infrastructure Network Topology").

Network Architecture

The architecture diagram (FINArchitecture.png) shows the overall network architecture. fedoraproject.org and admin.fedoraproject.org are round-robin DNS entries, populated based on GeoIP information: clients in North America, for example, get a pool of servers in North America. Each server listed in DNS is a proxy server that accepts connections with Apache. Apache uses HAProxy as its backend, and some (but not all) services sit behind varnish for caching. If varnish has a response cached, it answers from the cache; otherwise it forwards the request to a backend application server. Many of these application servers are in the main datacenter, phx2, and some are at other sites. The application server processes the request and sends the response back.
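The request path described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the region names, the example.org hostnames, and the helper names GeoRoundRobin and handle_request are hypothetical, and the sketch hands out one address per lookup, whereas real round-robin DNS typically returns the whole pool in rotating order. It also models a service that sits behind a cache, which the text notes is not true of every service.

```python
from itertools import cycle

# Hypothetical region-to-proxy pools; the hostnames are illustrative only.
PROXY_POOLS = {
    "north_america": ["proxy-na-1.example.org", "proxy-na-2.example.org"],
    "europe": ["proxy-eu-1.example.org", "proxy-eu-2.example.org"],
}
DEFAULT_REGION = "north_america"  # assumption: fallback for unknown regions


class GeoRoundRobin:
    """Pick a proxy from the client's regional pool, rotating on each lookup."""

    def __init__(self, pools, default_region):
        self._default = default_region
        self._rotations = {region: cycle(hosts) for region, hosts in pools.items()}

    def resolve(self, region):
        # Unknown regions fall back to the default pool.
        rotation = self._rotations.get(region, self._rotations[self._default])
        return next(rotation)


def handle_request(path, cache, backend):
    """Answer from the cache when possible, otherwise ask the app server."""
    if path in cache:
        return cache[path], "cache"
    body = backend(path)  # the application server processes the request
    cache[path] = body    # remember the response for later requests
    return body, "backend"
```

A first request for a path is served by the backend and stored; a repeat of the same path is then answered from the cache without touching the application server.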

Proxy View

The proxy flow chart (FINProxyLayer.png) shows what happens inside the proxies. Incoming DNS-balanced user requests hit Apache httpd on a proxy server. Apache forwards each request to HAProxy, which load-balances requests across the app servers, some of which are reached over VPN. Requests can also be passed to an external source: for example, fedoraproject.org/people/ is a proxy pass to people.fedoraproject.org, hosted at Duke. In some cases varnish also sits between HAProxy and the app servers to cache responses. Local requests use a standard alias in the Apache configs.
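The three kinds of handling described above (proxy pass to an external host, local alias, and load balancing over app servers) can be sketched as a small routing function. This is a rough model, not the actual Apache or HAProxy configuration: the /people/ mapping comes from the text, while the /static/ alias, the directory path, the app server names, and the make_router helper are hypothetical.

```python
from itertools import cycle

# From the text: fedoraproject.org/people/ is a proxy pass to this host.
PROXY_PASS = {"/people/": "http://people.fedoraproject.org/"}
# Hypothetical local alias, standing in for an Alias entry in the Apache configs.
LOCAL_ALIASES = {"/static/": "/srv/web/static/"}


def make_router(app_servers):
    """Return a route(path) function that mimics the proxy's decisions."""
    rotation = cycle(app_servers)  # simple round-robin stand-in for HAProxy

    def route(path):
        for prefix, upstream in PROXY_PASS.items():
            if path.startswith(prefix):
                return ("proxy_pass", upstream + path[len(prefix):])
        for prefix, directory in LOCAL_ALIASES.items():
            if path.startswith(prefix):
                return ("alias", directory + path[len(prefix):])
        # Everything else is load-balanced over the app servers.
        return ("haproxy", next(rotation))

    return route
```

With a router built from two hypothetical app servers, a request for /people/jdoe/ is passed to the external host, while other application paths alternate between the two servers.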

Application Layer

The application layer diagram (FINApplicationLayer.png) gives a generic view of how our applications work. Each application may have its own design, but the premise is the same. Incoming requests are load-balanced by the proxy servers and reach the appropriate service box. All application servers in the clustered services area must be identical; if an exception is made, that server must be moved to a solo services box. Most solo services are one-offs or proof-of-concept (test) services. Most commonly, our single points of failure lie in the data layer.
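The "clustered servers must be identical" rule can be illustrated with a small check. This is a sketch under assumptions, not a description of any tooling the team uses: the split_cluster helper, the server names, and the idea of comparing flat configuration dictionaries (with hashable values) are all hypothetical, and treating the most common configuration as the reference is a choice made for the example.

```python
from collections import Counter


def split_cluster(servers):
    """Split servers into (clustered, solo) by comparing their configurations.

    `servers` maps a server name to a flat dict of hashable config values.
    Servers matching the most common configuration stay clustered; any
    server that differs is flagged as a candidate for a solo services box.
    """
    if not servers:
        return [], []
    # Order-independent fingerprint of each server's configuration.
    fingerprints = {
        name: tuple(sorted(config.items())) for name, config in servers.items()
    }
    reference, _count = Counter(fingerprints.values()).most_common(1)[0]
    clustered = sorted(n for n, fp in fingerprints.items() if fp == reference)
    solo = sorted(n for n, fp in fingerprints.items() if fp != reference)
    return clustered, solo
```

Given three servers where one runs a different package version, the two matching servers remain clustered and the odd one out is reported as a solo candidate.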

Contributing

You can contribute to Fedora Infrastructure in several ways. If you want to improve the quality of the content on this page, have a look at GettingStarted (https://fedoraproject.org/wiki/Infrastructure/GettingStarted). If you are wondering why there is no server in your country and would like to donate hardware, please visit our donations (https://fedoraproject.org/wiki/Donations) and sponsors (http://fedoraproject.org/sponsors) pages.