<DRAFT>

Fedora Cloud

This page tracks what is currently happening on our Fedora cloud test instance, which runs on the xen6 box for now. It also serves as a starting point for what we need to do and what work remains.

Overview

Technologies we'll use

- Virtualization : KVM

- Platform management : oVirt (http://ovirt.org)

- Storage : iSCSI share

- Disk management : LVM

- OS provisioning : Cobbler

Cloud instance

Currently, the cloud instance is running under an ovirt-appliance provided by the oVirt team.

We need to decide how to deploy the cloud instance in Fedora infrastructure. The options are:

- Use a preconfigured ovirt-appliance (just like the test instance)

- Deploy and manage all the apps that oVirt uses (cobbler, collectd, db, etc.) ourselves

I favor the second option.

Hardware

The nodes will be diskless 1U x3550s with 16G RAM and 2x quad-core CPUs. The storage nodes will be 2U boxes of a similar type.

Network

For the initial rollout there will be two networks.

The first network will use an external IP pool of about 80 IPs (no ETA on this yet).

The second network will be a combined storage and management network in private IP space (10.something). We only have one switch for the initial rollout, so VLANs will take care of the separation.

For future rollouts we may add an additional switch, use multipath, etc.

Temporary repo

Red Hat

- rhel_i386

- rhel_x86_64 (not yet)

Fedora

- fc10 (not yet) :: the oVirt repo can be used for now.

- fc11 (not yet) :: the oVirt repo should do the trick as well.

oVirt

Puppet Files

The files below need to be managed by Puppet.

In /puppet/config/cloud:

- /etc/ovirt-server/database.yml

- /etc/ovirt-server/db

- /etc/ovirt-server/development.rb

- /etc/ovirt-server/production.rb

- /etc/ovirt-server/test.rb

- /etc/sysconfig/ovirt-mongrel-rails

- /etc/sysconfig/ovirt-rails

In /puppet/config/web:

- /etc/httpd/conf.d/ovirt-server.conf

[more]

Authentication (web access)

By default, oVirt handles authentication with krb5 backed by an LDAP database (it also includes an IPA instance). That is not the approach we want to follow.

Fedora people should be able to log in through their FAS account (or a trusted CA?).

I'm now wondering which way to go. We could follow that approach, which would mean hacking oVirt a bit (e.g. not letting Fedora people see the Red Hat pool through the web interface). We could instead run a Trac instance where people request a virtual machine. Or we could have them apply to a new cloud-specific group, with additional tools to handle the VMs.

- Current status:

For now, I have set up the web interface to use Apache authentication.

The password file is stored in /srv

User and Permissions management

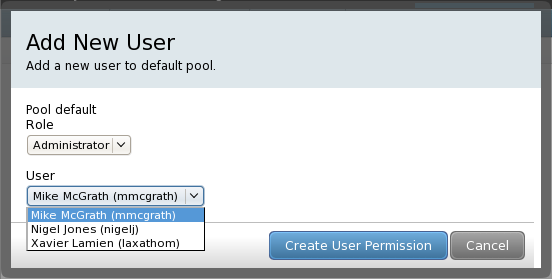

oVirt lists only registered LDAP users in the pop-up window.

As we use FAS2, we'll make oVirt work with FAS accounts to get something like this through the web UI.

Here is a capture of the result after hacking the code a bit:

Now, the users in that list can only be approved members or administrators of the sysadmin-cloud group (which was created for the cloud instance).
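The membership rule above could be sketched as a simple filter. This is only an illustration: the record fields (`username`, `group`, `status`, `role`) and the function name are hypothetical. They are not FAS2's actual schema, nor the change that was made to the oVirt code.

```python
from typing import Dict, List

# Hypothetical record shape; FAS2's real field names may differ.
Membership = Dict[str, str]

CLOUD_GROUP = "sysadmin-cloud"  # group name taken from this page


def selectable_users(memberships: List[Membership]) -> List[str]:
    """Return the usernames that may appear in the user pop-up."""
    allowed = set()
    for m in memberships:
        # Ignore memberships in any other group.
        if m["group"] != CLOUD_GROUP:
            continue
        # Keep approved members and group administrators only.
        if m["status"] == "approved" or m["role"] == "administrator":
            allowed.add(m["username"])
    return sorted(allowed)
```

With such a filter, a user who is approved in some other group but not in sysadmin-cloud would not show up in the list.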

As noted above, web access could be denied to regular Fedora users.

[to edit]

Hosts management (ovirt nodes)

oVirt can manage hosts in different locations, but a host cannot be shared between hardware pools.

Host information is indexed and stored in its database (table Host).

As far as I know, you cannot register hosts from the web interface.

You can only add already-registered hosts to [hardware|virtual|smart] pools, or whatever else you can dream up.

- How to register hosts

Package requirements:

- ovirt-node

- ovirt-node-selinux

- ovirt-node-statefull

- libvirt-qpid ## libvirt connector for Qpid and QMF, which are used by ovirt-server

- collectd-virt ## libvirt plugin for collectd

Auth requirements:

Register each host by generating its authentication file with the ovirt-add-host shell script.

Then load this file to authenticate your host.

This part should be moved out or bound to a generated CA.

Puppet files:

- /etc/collectd.conf ## where you add the ovirt-server side and libvirtd info

- /etc/libvirt/qemu.conf

- /etc/sasl2/libvirt.conf

- /etc/sysconfig/libvirt-qpid ## where you add the ovirt-server side information (host + port number)

iptables issues:

Default ports that need to be open:

- 7777:tcp ## for the ovirt-listen-awake daemon (tells ovirt-server that the node is available)

- 16509:tcp ## for the libvirtd daemon

- 5900-6000:tcp ## VNC connections from the client side

- 49152-49216:tcp ## for libvirt migration
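As a rough sketch, the port list above can be turned into iptables commands with a few lines of Python. The chain name (`INPUT`), appending with `-A`, and the helper name `iptables_rules` are assumptions made for illustration. The real nodes may use a different chain or have the rules written to /etc/sysconfig/iptables by Puppet.

```python
from typing import List, Tuple

# (port or port range, purpose), as listed on this page.
# iptables writes ranges as "first:last".
NODE_PORTS: List[Tuple[str, str]] = [
    ("7777", "ovirt-listen-awake"),
    ("16509", "libvirtd"),
    ("5900:6000", "VNC from clients"),
    ("49152:49216", "libvirt migration"),
]


def iptables_rules(ports: List[Tuple[str, str]] = NODE_PORTS,
                   chain: str = "INPUT") -> List[str]:
    """Build one 'accept TCP' command line per port entry.

    The chain name is an assumption; adjust it to the local firewall layout.
    """
    return [
        f"iptables -A {chain} -p tcp --dport {port} -j ACCEPT  # {purpose}"
        for port, purpose in ports
    ]


if __name__ == "__main__":
    # Print the commands for review; nothing is applied to the firewall.
    for rule in iptables_rules():
        print(rule)
```

The script only prints the commands, so the rules can be reviewed before anything is applied to a node.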

Host-side registration (/var/log/ovirt.log)

Starting wakeup conversation.
Retrieving keytab: 'http://management.priv.ovirt.org/ipa/config/192.168.50.3-libvirt.tab'
Disconnecting.
Sending oVirt Node details to server.
Finished!
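Judging from the single log excerpt above, the keytab URL appears to be built from the management server name and the node's IP address. The sketch below reproduces that pattern. The pattern itself is inferred from this one log line, and the function name `keytab_url` is hypothetical, so treat it as a guess to verify against the ovirt-node scripts rather than as documented behaviour.

```python
import ipaddress


def keytab_url(server: str, node_ip: str) -> str:
    """Guess the keytab URL a node would fetch during registration.

    Assumed pattern (from one log line): http://<server>/ipa/config/<ip>-libvirt.tab
    """
    # Raises ValueError if node_ip is not a valid IP address.
    ip = ipaddress.ip_address(node_ip)
    return f"http://{server}/ipa/config/{ip}-libvirt.tab"


if __name__ == "__main__":
    print(keytab_url("management.priv.ovirt.org", "192.168.50.3"))
```

A helper like this could be handy when checking that the keytab for a newly added node is actually reachable before booting it.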

Storage management

For the initial rollout, storage will be provided by two servers, each with 4T of usable space. One will be the master and export its data via iSCSI to the nodes. The other will be secondary storage (replicated to via iSCSI and software RAID). This is not an HA setup but a data redundancy setup: if one server fails, we won't lose any data.

Pools management

[to edit]

Smart Pools

I won't explain what a pool is for now.

We will need different pools to separate the different uses.

For example: one pool for all Red Hat VMs, one for Fedora people, another for specific users, and so on.

- Current status:

I have just started creating smart pools. If you see the same pool more than once, don't worry about it.

It's just lynx, which messed things up during my attempts.

I'll need proper web access to move forward; lynx is too limited for working with the oVirt web UI.

Cobbler

Cobbler is how oVirt handles OS provisioning and profile management.

We'll need to anticipate questions from people such as:

Can we request a specific profile for our VM, or is it up to us?

Authentication

We'll also need to bind it to FAS.

</DRAFT>